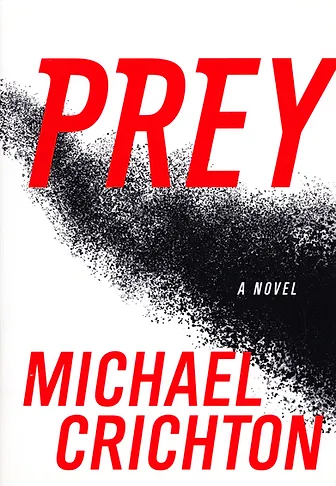

Prey

In His Own Words

In the case of Prey, I was interested in knowing where three trends might be going—distributed programming, biotechnology, and nanotechnology.

As a concept, nanotechnology dates back to a 1959 speech by Richard Feynman called There’s Plenty of Room at the Bottom. Forty years later, the field is still very much in its infancy. But practical applications are starting to appear.

Nanotechniques are already being used to make sunscreens, stain-resistant fabrics, and composite materials in cars. Soon they will be used to make computers and storage devices of extremely small size.

And some of the long-anticipated “miracle” products have started to appear as well. In 2002, one company was manufacturing self-cleaning window glass; another made a nanocrystal wound dressing with antibiotic and anti-inflammatory properties.

Synopsis

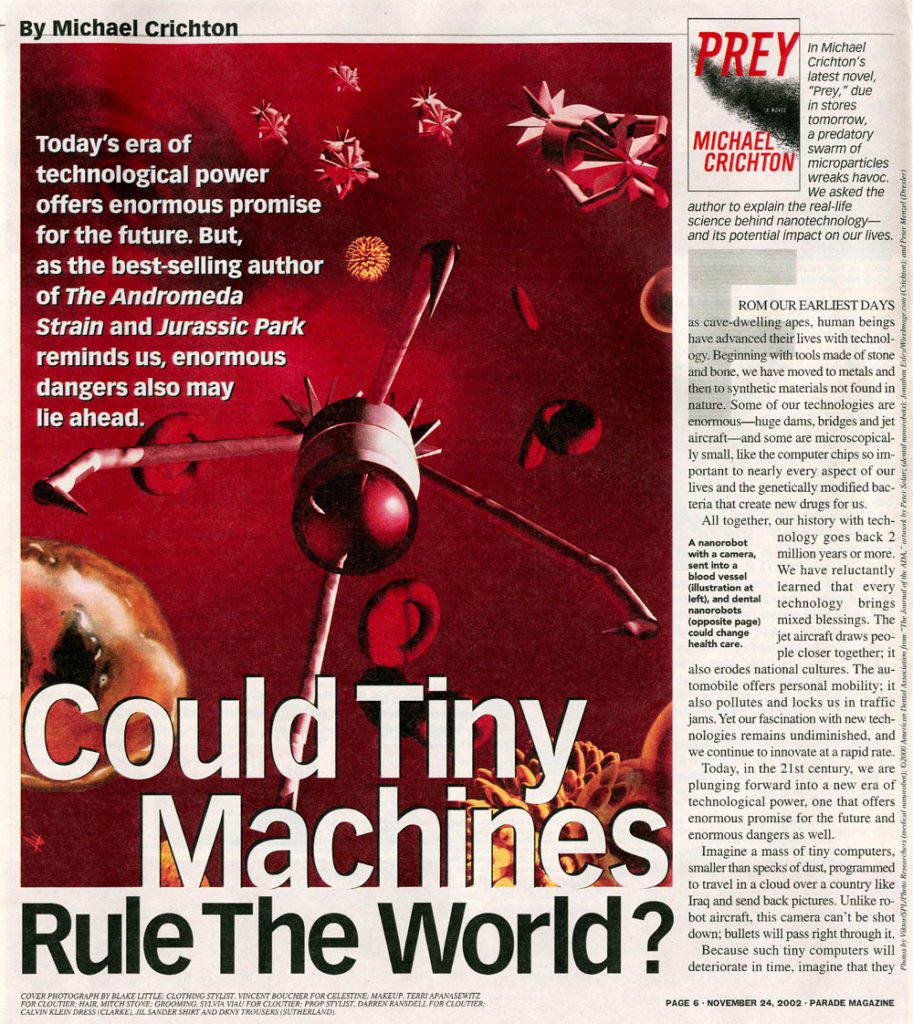

In the Nevada desert, an experiment has gone horribly wrong. A cloud of nanoparticles — micro-robots — has escaped from the laboratory. This cloud is self-sustaining and self-reproducing. It is intelligent and learns from experience. For all practical purposes, it is alive.

It has been programmed as a predator. It is evolving swiftly, becoming more deadly with each passing hour.

Every attempt to destroy it has failed.

And we are the prey.

From the Archives

In 2002, Michael Crichton wrote an article for Parade magazine that coincided with the release of his novel, Prey. In the following excerpt, Michael speculates about how nanotechnology could change our world:

“These organisms will be created by nanotechnology, perhaps the most radical technology in human history: the quest to build man-made machines of extremely small size, on the order of 100 nanometers, or 100 billionths of a meter. Such machines would be 1000 times smaller than the diameter of a human hair. Experts predict that these tiny machines will provide everything from miniaturized computer components to new medical treatments to new military weapons. In the 21st century, they will change our world totally.

The potential benefits are spectacular: Tiny robots may crawl through your arteries, cutting away atherosclerotic plaque; powerful drugs will be delivered to individual cancer cells, leaving other cells undamaged; teeth will be self-repairing. Cosmetically, you will change your hair color with an injection of nanomachines that circulate through the body, moving melanocytes in hair follicles. Other nanomachines will lighten or darken skin color at will, removing blemishes, birthmarks and liver spots in the process; still others could cleanse the mouth and eliminate bad breath. Nonsurgical nanoprocesses could even perform liposuction and body reshaping. They also will repair knees and spines.

Living spaces will be transformed, with self-cleaning dishes and carpets and permanently clean bathrooms. Windows will lighten or darken at will; programmable paint will change color. You can walk through the walls of your house, since they are composed of particle clouds. Your personal computer and your watch will be painted on your arm. Temperature-sensitive clothing will loosen when it gets hot, insulate when it gets cold.

In this vision of the future, roving nanomachines will convert trash dumps to energy; solar nanomachines will coat your house, generating electricity; flexible nanomachines will provide earthquake protection. It even may be possible to move your house on the backs of millions of nanomachines, creeping across the lawn.”

Prey was #1 on the New York Times Bestseller List for 4 weeks.

Q&A with Michael Crichton

Of all the disturbing subjects you’ve explored in your career, is it these small, insidious things that concern you the most? Because the problem in Prey, as in The Andromeda Strain, seems more tangible, more of a problem that we all need to deal with and think about now, than the problem of, say, revenant dinosaurs?

I don’t think in this way. I tend to write books that grab me by the throat and force me to write them. I don’t usually feel as if I have a choice, or much control of what comes out. Often, I don’t want to be writing a particular book, but there I am, writing it anyway.

We read Prey as a Frankenstein for our times. Like Mary Shelley, you speak to the eternal debate between the humanist and the scientist — the problem being that humanity is defined in large part by its mastery of science (going back to the taming of fire) but can also be undone by science, as the twentieth century made abundantly clear. Do you believe that it is the moral obligation of a writer with your scientific background and powers of elucidation to come down more on the humanistic side of this debate, as you seem to do in Prey?

No. As CP Snow indicated so well in “Two Cultures,” the problem is not to come down on one side of the debate or the other. The problem is to be able to deal with both sides at once. We are, as a society, tremendously dependent on science and technology. I would long ago be dead if I had lived in an earlier time. So there is no going back. At the same time, the creators of technology often do not seem to be as concerned about the effects of their work as outsiders think they ought to be. But this attitude is changing.

Just as war is too important to be left to the generals, science is too important to be left to the scientists. But in recent years so-called humanistic criticism has been incredibly ill-informed and, frankly, rather fantastical. (I am speaking particularly of post-modern criticism.) Scientists aren’t going to listen to people who have no idea what they are actually doing, or to those who scare the public with absurd risks.

As for science changing the definition of humanity, that horse left the barn long ago. Planning a hip replacement when you’re older? Implanted pump to deliver medications? How about a PDA to carry information in your pocket? A cell phone to link you around the clock, around the world. A pill to relax you, another to pick you up. Jet planes to carry you in comfort quickly to any spot on the planet. And of course with freedom from disease, from vaccination and pharmaceuticals, once you get there. And a handheld GPS to tell your location within inches.

Not so long ago, parents did not name their children for a while, because so many of them died young. Often they posed for pictures with the dead infant, before it was buried. Hawaiians didn’t celebrate the birth of a child until it was a year old—a custom still followed today. Not so long ago, one woman in six died in childbirth. Being “human” included these facts of life.

All that’s changed, of course. And in doing so, it’s changed the definition of what is human. What our lives are like, what our expectations are like, at least in the industrialized countries of the world. Nobody’s complaining about that part of the impact of science on humanity.

Did you write Prey, in part, with a younger readership in mind? Since it is the next generation that will have to tackle head-on the issues that surround the convergence of nano-, bio-, and computer technologies? What is your recommended reading list for a young person with a scientific bent? Prey argues powerfully for studying ethics (as business school students are being made to do after an outbreak of corporate scandals).

I’ve always had a lot of younger readers, and I hope they like this book, too. But I don’t really write with anybody in mind. Prey has a reading list at the back of the book, which will give any interested reader a place to start.

But it is true that the younger generation will be faced with the problems of self-reproducing technologies in a way we haven’t had to deal with yet. I think they’ll be up to the challenge.

How do you stay informed about current and cutting-edge technology? Is it primarily a tremendous amount of reading on the subject, or are you also actively involved in the scientific community?

Primarily reading. Talking to experts has advantages and disadvantages. Many times sensible experts are not inclined to speculate. After all, scientists are in effect trained not to do that. And there are other people who speculate wildly. That’s not necessarily helpful, either.

So I find that reading is, for me, the best way to keep up.

How is it possible that computers or man-made technological devices could ever “think” for themselves? Aren’t they limited to the programming input of humans?

No, they’re not. So-called multi-agent programming creates large numbers of virtual agents inside the computer, and lets them interact to produce a result. Sometimes the agents are directed to cooperate to achieve a goal; sometimes they compete; sometimes they do both. But the ultimate behavior of all these agents is unpredictable. And often lifelike in its appearance.

Whether this constitutes the ability of a program to “think for itself” is largely a matter of definition. Nobody knows how we think, anyway.

Is the fulfillment or satisfaction you get from the solitary endeavor of writing books different from the fulfillment from a collaborative endeavor like producing a film or TV program?

Yes, but they both have their unpleasant aspects. Writing a book, you get to have things exactly as you want them, but you are often struggling with yourself, which is a very hard thing to do. And you’re alone a lot of the time, which is fine with me, except that eventually I start to be very silent in public settings and I find I’ve lost my ability to do small talk. (I never had much ability at that, anyway.) So in a way, writing is anti-social. But when the book is done, it’s your book: good or bad, right or wrong, it’s your own work. And that can produce a feeling of satisfaction.

Collaborative work in film or television is the reverse. You never get to have things exactly as you want them, and you are always struggling with other people, which is easier than struggling with yourself, but not necessarily more fun. The finished project is never entirely yours, even if you are the director and writer. After so many years doing collaborative work, I’ve gotten used to the way it goes. And sometimes it is incredible fun. So you take the good with the bad.

Book Covers